Anthropic Deepens Google and Broadcom Ties as the AI Compute Race Accelerates

Anthropic is making a bigger bet on compute. On April 6, 2026, the company said it will expand its use of Google Cloud and Google-built TPUs supplied through Broadcom, adding multiple gigawatts of capacity expected to come online starting in 2027.

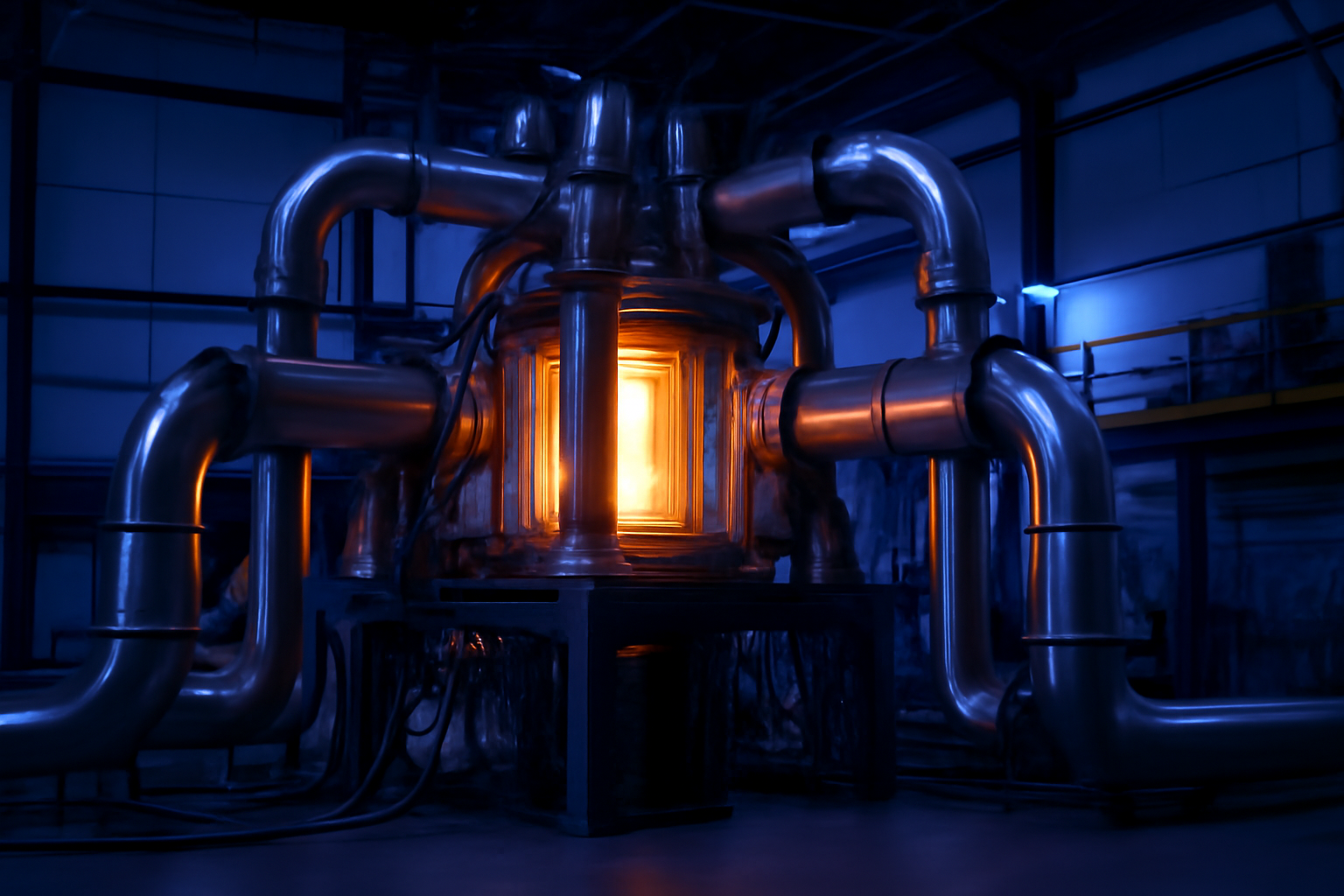

The move is a reminder that the generative AI market is no longer only about model launches and chatbot features. It is also a contest over power, chips, and cloud access — the infrastructure required to train and run increasingly demanding AI systems.

What Anthropic Announced

Anthropic said the expansion will support its foundation models, agents, and enterprise applications, with the added capacity delivered through Google Cloud services and Google-built TPUs supplied through Broadcom.

According to the announcement, the expansion will begin coming online in 2027. Anthropic also said it continues to use Google Cloud services such as BigQuery, Cloud Run, and AlloyDB to support its data, AI development, and applications.

- Anthropic announced the expansion on April 6, 2026.

- The deal adds multiple gigawatts of TPU capacity.

- Capacity is expected to begin coming online in 2027.

- The company framed the move around models, agents, and enterprise use cases.

Why the Deal Matters

The announcement underscores how much compute has become a strategic advantage in generative AI. The largest model developers need vast and reliable access to chips and cloud infrastructure to keep pace with training demands and inference traffic.

For Anthropic, the expansion suggests confidence that demand for Claude and related enterprise offerings will continue to grow. For Google and Broadcom, it highlights the commercial value of becoming core suppliers to frontier AI developers.

Beyond Chatbots

Anthropic’s language in the release points to a broader shift in the market. The company is not just scaling consumer-facing assistants. It is also building the infrastructure to support agents and business workflows, which increasingly matter as enterprises look for AI systems that can do more than generate text.

That makes the agreement relevant beyond one company. It reflects a wider industry pattern in which generative AI leaders are racing to secure long-term compute commitments before demand outstrips supply again.

What It Signals for the Industry

The deal arrives as investors and customers alike continue to focus on whether AI firms can turn rapid adoption into durable revenue. Big infrastructure commitments can help answer that question, but they also raise the bar on execution and capital intensity.

If Anthropic’s scale-up works as planned, the company will have more room to train bigger models, support more enterprise customers, and expand its agentic offerings. But the competitive pressure from rivals with their own chip and cloud strategies is unlikely to ease.

What to Watch

The next key milestone is whether the promised TPU capacity begins arriving on schedule in 2027. It will also be worth watching whether Anthropic uses the added infrastructure to launch new model tiers, more capable agents, or expanded enterprise products.

In the broader market, the bigger question is whether other AI companies respond with similar long-term compute deals, potentially tying the generative AI race even more tightly to the semiconductor and cloud sectors.

Source Reference

Primary source: Google Cloud / PR Newswire

Source date: 2026-04-06T18:00:00Z

